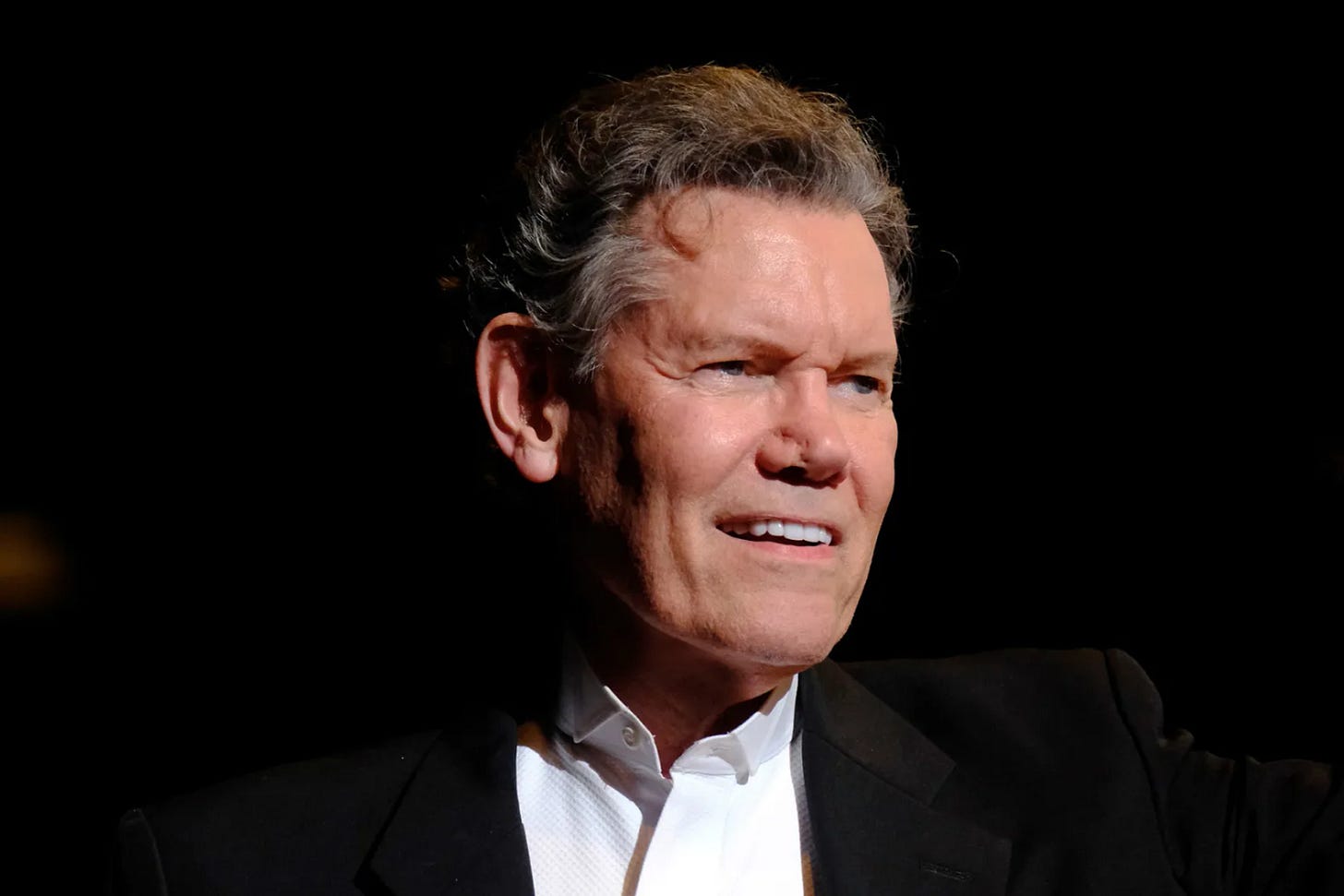

Warner Music's AI Project (ft. Randy Travis)

This Week's AI Advancements: Randy Travis Gets His Voice Back with AI Music Experiment, Microsoft Bans Police Facial Recognition Technology Usage, Data Licensing Deals You Should Know, and a Speed Round.


Advancement #1: Randy Travis Gets His Voice Back Through Warner AI Music Experiment

Country music legend Randy Travis, who lost his ability to speak and sing after a stroke in 2013, has released his first song in over a decade, "Where That Came From." Warner used an undisclosed custom model, 42 of Travis’ vocal-isolated recordings, and a surrogate singer to achieve the result.

The project, endorsed by Warner Music Nashville, showcases an example of what the company sees as "AI for good"—using technological advancements for human-first outcomes such as giving a voice back to an artist who can no longer perform.

Naturally, this raises concerns about the use of an artist’s identity and about using AI to copy artists’ voices without permission, but Tennessee recently passed a law targeting exactly this: the ELVIS Act. Enforceable as of July 1, the ELVIS Act aims to protect artists by letting labels take legal action if anyone uses AI to mimic their voices without getting permission first. Besides being a nod to the late Elvis Presley, the name stands for the Ensuring Likeness Voice and Image Security Act.

My Initial Thoughts: This is certainly a feel-good story in a landscape that is often plagued with fear.

I previously worked on Sony Music's copyright team in New York. When I talk about the music copyright debate with AI, I have always, always stated that I believe an artist's likeness and style will now become copyrightable. With the ELVIS Act in Tennessee, my claims have officially been validated.

On the other hand, this technology could also pave the way for large music labels such as Warner to digitally revive famous, deceased artists from their extensive catalogs and unlock new avenues for profit. Not only is this not federal legislation yet, but Tennessee did not recognize publicity rights after an artist had passed away. Current copyright law protects works for the life of the artist plus 70 years, and the point of that term is to allow an artist’s family or copyright owner to benefit from their life’s work.

So as soon as an artist dies, is their likeness now fair game, and no longer protected after death? It really seems to undermine the point of protecting works for 70 years.

One last thing… are we going to start to cross into territory where an artist’s likeness can now be sold and owned by companies? It’s an interesting time with a lot of questions, that’s for sure.

Advancement #2: Microsoft Bans US Law Enforcement from Using Azure AI/ML Suite for Facial Recognition

Microsoft has recently updated its terms of service to prevent U.S. police departments from using its Azure OpenAI Service for facial recognition purposes. This change follows recent industry developments in which companies like Axon have integrated AI technologies, claiming to use OpenAI's GPT-4 to summarize body camera audio.

This decision explicitly restricts the application of generative AI technologies, including current and potential future image-analyzing models, for facial recognition by law enforcement in the U.S. It acknowledges the risk of use in high-stakes environments like law enforcement, where biases and hallucinations can have significant repercussions. Additionally, the updated policy extends globally to ban the use of real-time facial recognition technologies on mobile devices such as body cameras and dashcams by any law enforcement agency.

The broader restrictions are specific to the U.S.: they do not apply to international police forces, nor to facial recognition using stationary cameras in controlled settings within the U.S.

Despite these updates, OpenAI has announced formal collaborations with the Pentagon this year, and Microsoft has launched its Azure OpenAI Service for Government (which has FedRAMP approval).

My Initial Thoughts: While AI has been a staple in government technology development for years (I was on a project starting in 2019 that leveraged multiple different types, such as JPL), this move appears to primarily target the use of AI technologies by government contractors, such as Leidos or Booz Allen, or any company developing technology that can be used in government settings.

In simpler terms: Government contractors are banned from utilizing OpenAI for many government applications, BUT... direct collaborations between companies like OpenAI and governmental bodies, such as the Pentagon, remain allowed.

Ultimately, companies like Microsoft are ensuring direct oversight of how these technologies are implemented AND giving themselves a bit of a competitive advantage.

What they’re basically saying? “We can use our tech for military purposes with the government… but you can’t.”

Advancement #3: Two Data Licensing Deals This Week That You Should Know About

EyeEm

EyeEm, the photo-sharing platform often called Europe's Instagram, recently announced that it will start using users’ photos to train AI unless users opt out by deleting their content within 30 days. The platform has a massive library of 160 million images and nearly 150,000 users. The change was communicated through an email that introduced a new clause in the Terms & Conditions.

“By uploading Content to EyeEm Community, you grant us regarding your Content the non-exclusive, worldwide, transferable and sublicensable right to reproduce, distribute, publicly display, transform, adapt, make derivative works of, communicate to the public and/or promote such Content. This specifically includes the sublicensable and transferable right to use your Content for the training, development and improvement of software, algorithms and machine learning models. You can request the deletion of your Content at any time. The conditions for this can be found in section 13.”

But Section 13 of EyeEm’s new terms outlines a rather complicated deletion process that starts with users manually removing photos one at a time. Users must email support@eyeem.com, providing all individual Content ID numbers and specifying whether the photos should be removed from just their own account or the EyeEm Market as well.

The catch? EyeEm stated that the deletions can take as long as 180 days to process, yet users are given only 30 days to opt out of the new data licensing terms. Thus, the only way for users to remove their photos in time is to delete them manually, one by one.

Stack Overflow

Meanwhile, Stack Overflow, the #1 community where developers seek answers, has finalized a deal with OpenAI to enhance AI's ability to respond to development queries. A recent poll showed that 44% of developers are already using AI for some coding tasks, with an additional 26% planning to start soon. Following a noticeable drop in platform traffic after the rise of advanced AI models last year, Stack Overflow is seeking to leverage its vast data pool through licensing agreements to regain its financial footing. The details of the financial arrangement, however, remain unclear.

My Initial Thoughts: EyeEm's new licensing clause is almost a mirror image of the one Meta has, though EyeEm was significantly more transparent about what it is planning to do with all of its content. But the truth is, they are also making it impossible for users to opt out.

Advancement #4: Quick Speed Round

#1: X will now be using Grok to power a feature in the app that summarizes a user’s personalized trending stories. Called Grok’s Stories, the new feature will let Premium subscribers read a summary of the posts on X associated with each trending story on the “For You” tab.

#2: Anthropic is introducing a new paid enterprise plan, named "Team," aimed at sectors such as healthcare, finance, and legal, alongside launching a new iOS app. The Team plan offers customers prioritized access to Anthropic’s Claude 3 AI models, early access to new features, and enhanced admin controls for $30 per user per month.

#3: Microsoft has created an artificial intelligence model designed for use by American intelligence agencies. It runs in an "air-gapped" environment, entirely isolated from the internet, making this the first time a large language model has operated independently of the online world. With this innovation, Microsoft aims to provide a highly secure system to the US intelligence community.

At a security conference, Sheetal Patel, an assistant director of the CIA, stated that “There is a race to get generative AI onto intelligence data.” She said that the first country to use generative AI for its intelligence would win that race, adding, “And I want it to be us.”

My Initial Thoughts: Remember my joke that Microsoft’s ban on use for military/government purposes was so that they could shut out the competition? Remember that.